Ragnarock: AI Best Practices

What is Ragnarock?

Ragnarock is the framework for best practices we’ve developed through building generative AI solutions on AWS. It is a combination of several techniques, a common set of patterns, and an overall architecture we’ve found particularly efficient, accurate, and effective when building generative AI solutions. The name itself is derived from the combination of Retrieval-Augmented Generation, a Neoteric* Agent, and Amazon Bedrock (RAG-NA-rock).

Why Ragnarock?

How do you handle the inherent randomness of large language models when you need to build a reliable, accurate, and consistent solution?

Traditionally there have been two answers to this problem.

- Avoid use cases which require highly deterministic behavior

- Tolerate randomness and aim for consistency elsewhere in the system

Ragnarock is a third way—our collection of best practices for creating consistent and performant generative AI solutions.

Benefits of Ragnarock

- Cost Efficiency (Token Usage)

- Prompting Flexibility

- Latency (Time-to-Output)

- Throughput (Generations Per Second)

- Security

- Data Privacy

- Reliability (Request Timeout / Error Handling)

- Accuracy (Hallucination Rate)

- Safety

- Leverage Your Data

- Leverage Your Infrastructure

Project Fit Criteria

- Knowledge Management

- AI Assistants & Chatbots

- Customer Support

- Search & Research Tooling

- Keyword Extraction

- Concept-Specific Image Generation

- Document Processing & Creation

- Any Solution Needing Agent Capabilities

How Ragnarock Works

We rely on “semantic distillation,” a process for extracting and augmenting information while retaining meaning. We do this to the underlying data as well as the prompts, ensuring that the linkages between the two are embedded into the system and sufficiently generalized. This allows the AI to handle a wide variety of inputs while still arriving at the intended output.

To do this, we use a combination of:

- An Agent Orchestrator

- Meta-Prompting

- Chain-of-Thought

- A Vector Database

- Retrieval-Augmented Generation

- Embedding

- AWS Step Functions

- Web Crawling (optional)

- Long-Term Storage

- Amazon Bedrock

With these elements and techniques, we tune and prepare a language model for its use case and simulate a large variety of prompting scenarios, testing and refining until we achieve the accuracy required of the solution.
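As a rough illustration of how the retrieval pieces fit together (embedding, a vector store, and Retrieval-Augmented Generation), here is a minimal Python sketch. It is not Ragnarock's implementation. The bag-of-words `embed` function stands in for a real embedding model, the in-memory `DOCUMENTS` list stands in for a vector database, and every function name, sample document, and prompt wording is hypothetical. The call to a model on Amazon Bedrock is left out; the sketch stops at the assembled prompt.

```python
import math
import re
from collections import Counter

# Hypothetical sample data standing in for an indexed knowledge base.
DOCUMENTS = [
    "Refunds are processed within five business days.",
    "Our support desk is open Monday through Friday.",
    "Shipping is free on orders over fifty dollars.",
]


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: lowercase word counts."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[term] * b[term] for term in a if term in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def retrieve(query: str, documents: list[str], k: int = 2) -> list[str]:
    """Return the k documents most similar to the query."""
    query_vector = embed(query)
    ranked = sorted(
        documents,
        key=lambda doc: cosine(query_vector, embed(doc)),
        reverse=True,
    )
    return ranked[:k]


def build_prompt(query: str, passages: list[str]) -> str:
    """Assemble a grounded prompt from the retrieved passages."""
    context = "\n".join(f"- {passage}" for passage in passages)
    return (
        "Answer using only the context below.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {query}"
    )


if __name__ == "__main__":
    question = "When are refunds processed?"
    passages = retrieve(question, DOCUMENTS, k=1)
    print(build_prompt(question, passages))
```

In a production setting, the toy pieces would presumably be replaced by a real embedding model and a vector database, and the assembled prompt would be sent to a language model. Grounding the prompt in retrieved passages is one common way to reduce reliance on the model's unaided recall.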

Learn more about the technical details of this approach here.

Elements of the Solution

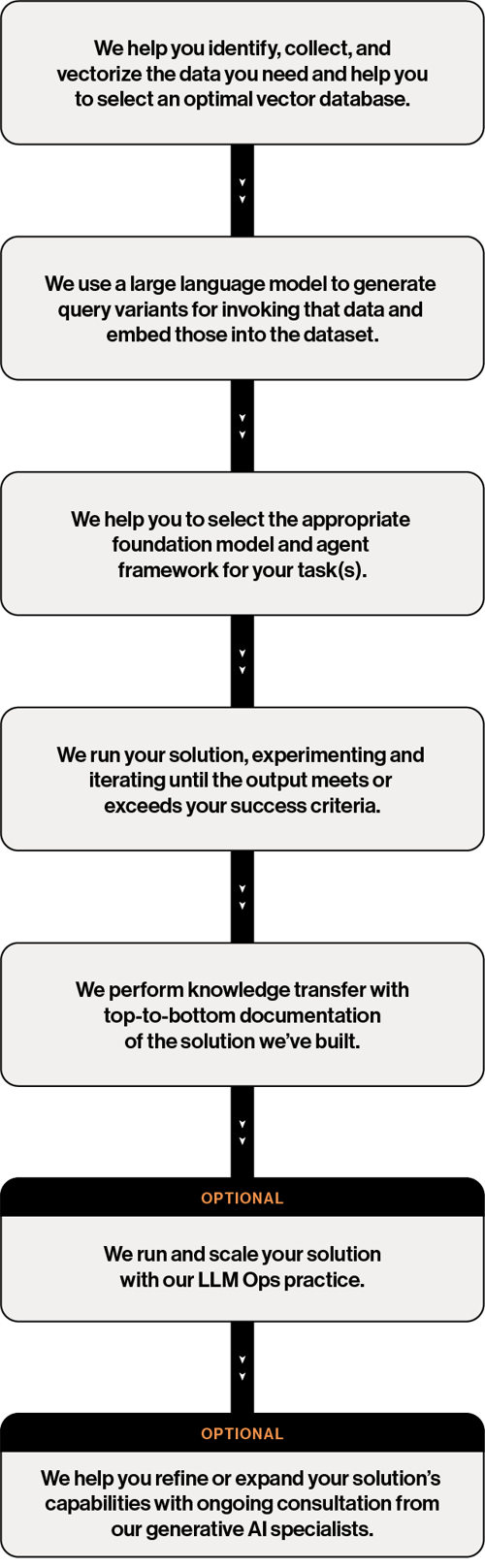

Our Process

Get Your Pilot Launched

Would you like to see how the Ragnarock best practices can impact the performance of your solution? Talk to one of our generative AI specialists today.