Containers are transforming enterprise IT. Gartner forecasts that by 2022, 75 percent of global organizations will have containerized applications running in production, compared to only 30 percent doing so today. There is one clear-cut reason for the increasing popularity of containers: they give companies more computing power at lower costs.

However, to realize the efficiency and cost-effectiveness of running containers on AWS, DevOps teams have to ensure that application code is deployed as quickly as possible to meet the needs of the continuous integration/continuous deployment (CI/CD) pipeline. Containers are the key to helping companies develop and deploy cloud-native applications that scale seamlessly and efficiently in response to changes in demand.

How Containers Create More Efficient Apps

Containerization makes applications easier to manage by separating them into small packages of code that combine all app components in a single container, including configuration files, libraries, and dependencies. The process makes more efficient use of resources by allowing all containers to share a lightweight OS, while each container’s processes run in isolation.

By holding all of an application’s components and core data, containers serve as an abstraction of the software layer. This is the biggest difference between containers and virtual machines (VMs), which simulate a physical machine and thus are abstractions of the hardware layer. As a result, each VM has to run its own OS in addition to the application’s files, libraries, and dependencies. In addition, containers can be configured with only the runtime and configuration components they need, which lets them be drastically smaller than a full server distribution: an Alpine Linux container is just a few MB, as opposed to the several hundred MB of an Ubuntu-based container.

When using traditional virtualization, each application must be adapted to the host platform, so an application deployed using the VMware hypervisor, for example, couldn’t be ported to a Kernel-based Virtual Machine (KVM) hypervisor without significant changes to the code. By contrast, containerized apps can be moved from a desktop to AWS, Google, or Microsoft Azure cloud environments with few or no code updates.

Shortening the code-deployment cycle fosters innovation

The primary advantage of containers over traditional software development methods is the ability to run apps in many different environments without having to modify the code. By using containers, developers can create their apps by accessing small services via an API rather than relying on a siloed development environment. The microservices architecture allows small changes to apps to be pushed out immediately, without affecting any other microservices. In addition, developers can work locally, confident that their code will work when ported to virtually any platform.

The adoption of containers for app deployment means the “death of the release,” according to Pete Hodgson on DevOps.com. Not many years ago, it was common for months or even years to pass between software updates. Today, updates to production apps occur by the second.

For code deployments in AWS environments, Amazon Elastic Container Service (ECS) offers both a traditional container runtime environment running on EC2 instances and the Fargate launch type, which runs container services on “serverless” Docker containers where the hardware is abstracted and not managed by the user. To avoid downtime, Docker images are rebuilt and deployed with a rolling update model. Since Docker containers are built as layers, each deployment can be very lightweight.
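To make the rolling update model more concrete, here is a minimal sketch in Python that simulates how old tasks might be swapped for new ones without dropping below a capacity floor. It is a simplified model, not ECS's actual scheduler: the function name `plan_rolling_update` is hypothetical, the two percentage parameters are loosely modeled on the `minimumHealthyPercent` and `maximumPercent` settings in an ECS service's deployment configuration, the default values are illustrative, and every new task is assumed to become healthy immediately.

```python
def plan_rolling_update(desired, min_healthy_percent=100, max_percent=200):
    """Simulate a rolling update and return (old_tasks, new_tasks) after each round.

    Simplifying assumption: a started task counts as healthy right away.
    """
    if not 0 <= min_healthy_percent <= 100 or max_percent < 100:
        raise ValueError("expected 0 <= min_healthy_percent <= 100 <= max_percent")

    # Floor on running tasks (rounded up) and ceiling (rounded down).
    min_running = -(-(desired * min_healthy_percent) // 100)
    max_running = (desired * max_percent) // 100
    if min_running >= max_running:
        raise ValueError("no headroom between floor and ceiling; update cannot progress")

    old, new = desired, 0
    steps = []
    while old > 0 or new < desired:
        # Start as many new tasks as the ceiling allows.
        start = min(desired - new, max_running - (old + new))
        new += start
        # Stop as many old tasks as the floor allows.
        stop = min(old, (old + new) - min_running)
        old -= stop
        steps.append((old, new))
    return steps


if __name__ == "__main__":
    # Four tasks, never fewer than half running, never more than four at once.
    for old, new in plan_rolling_update(4, min_healthy_percent=50, max_percent=100):
        print(f"old={old} new={new}")
```

With a floor of 50 percent and a ceiling of 100 percent, the update proceeds in several small rounds; with a ceiling of 200 percent, all new tasks can start before any old ones stop, trading temporary extra capacity for a faster rollout.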

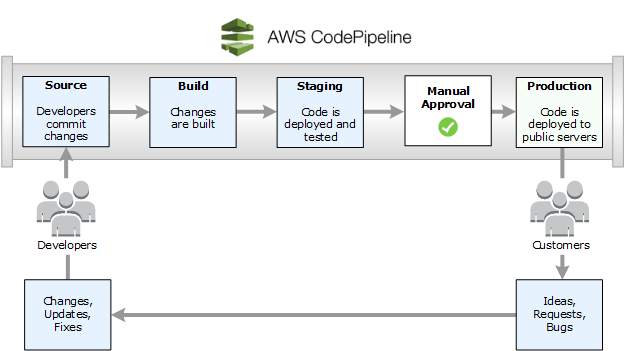

When running containers on AWS, code deployment can be automated by using AWS CodePipeline to detect the changes developers make to the source repository, build the changes, and run any tests that are required. Source: Amazon Web Services

Faster Code Deployments, More Efficient Developers

Companies are racing to adopt containerization for their applications because the technology has been shown to cut code deployment times and improve developer productivity. An example of a company realizing the benefits of running containers on AWS is Your Call Football, which makes an application that allows football fans to “call” their own plays and see actual players run the plays on the field. The app’s performance was bogging down whenever there was a burst of activity during gameplay, an activity pattern that mirrors the game of football itself.

The developers faced two significant performance challenges. First, traffic spiked during the three hours of a football game as 100,000 concurrent users played. Second, during gameplay, all users voted in the same 10-second window just before the actual football play was run on the field, which happened about 100 times during each game.
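A quick back-of-the-envelope calculation shows why that voting pattern is demanding. The sketch below uses only the figures quoted above and assumes, for illustration, that every concurrent user casts exactly one vote per play and that votes are spread evenly across the window; real traffic would likely be burstier, so the true peak could be higher.

```python
CONCURRENT_USERS = 100_000   # concurrent players during a game
VOTE_WINDOW_SECONDS = 10     # voting window before each play
PLAYS_PER_GAME = 100         # approximate number of voting rounds per game


def average_votes_per_second(users, window_seconds):
    """Average write rate if every user votes once, spread evenly over the window."""
    return users / window_seconds


def votes_per_game(users, plays):
    """Total votes in a game if every user votes once per play."""
    return users * plays


if __name__ == "__main__":
    rate = average_votes_per_second(CONCURRENT_USERS, VOTE_WINDOW_SECONDS)
    total = votes_per_game(CONCURRENT_USERS, PLAYS_PER_GAME)
    print(f"average votes per second during a window: {rate:,.0f}")
    print(f"total votes per game: {total:,}")
```

Under those assumptions the backend has to absorb roughly 10,000 votes per second during each window, and about 10 million votes over a game, with near-idle periods in between.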

To “tackle” these two problems, Mission, an AWS Premier Consulting Partner, worked with Cantina, the firm Your Call Football initially chose to provide its cloud infrastructure and game logic, to address the extreme traffic spikes. Mission used infrastructure-as-code to build out a backend for the application that featured a starting lineup of Kubernetes, Amazon Relational Database Service (RDS), and Amazon ElastiCache. The result was a faster, more efficient cloud environment for the app, and thousands of happy fans enjoying an enhanced game-time experience.

Improving reliability along with code-deployment performance

Autumn Games, which publishes Skullgirls and other popular cross-platform video games, needed to get better performance out of its AWS code-deployment pipeline. The company used Docker to deploy its application server software via a pipeline linked to the EC2 machine it relied on for development work. Concerns about the reliability and performance of its automated infrastructure led Autumn Games to look for a partner to help it improve the resiliency of its production pipeline and eliminate single points of failure.

AWS referred Autumn Games to Mission, an AWS Well-Architected Launch Partner, to assist the company as it migrated to a managed service environment to maximize the potential of AWS and achieve peak resilience and operations performance. Mission first conducted a Well-Architected Review and used the results to re-architect Autumn Games’ cloud infrastructure and applications. The new environment features a custom DNS failover for the company’s MongoDB stack that links to both AWS Lambda and Amazon Route 53.
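The details of that failover are not described here, so the following is only a hypothetical sketch of how a Lambda-driven DNS failover could work in principle, not the implementation Mission built. It assumes a health signal is already available and builds a request body in the shape of the `ChangeBatch` accepted by Route 53's `ChangeResourceRecordSets` API. The function names, hostnames, record type, and TTL are all made up for illustration.

```python
def choose_target(primary, secondary, primary_is_healthy):
    """Pick the hostname that DNS should point at (hypothetical helper)."""
    return primary if primary_is_healthy else secondary


def build_change_batch(record_name, target, ttl=60):
    """Build a Route 53 ChangeBatch dict that repoints a CNAME record.

    The record type and TTL are illustrative assumptions.
    """
    return {
        "Comment": "failover update (illustrative)",
        "Changes": [
            {
                "Action": "UPSERT",  # create the record or overwrite it
                "ResourceRecordSet": {
                    "Name": record_name,
                    "Type": "CNAME",
                    "TTL": ttl,
                    "ResourceRecords": [{"Value": target}],
                },
            }
        ],
    }


if __name__ == "__main__":
    # Hypothetical hostnames; the primary is assumed to have failed a health check.
    target = choose_target(
        "mongo-primary.example.internal",
        "mongo-secondary.example.internal",
        primary_is_healthy=False,
    )
    print(build_change_batch("db.example.com.", target))
```

Inside a Lambda function, a dict like this could be passed as the `ChangeBatch` argument of boto3's `change_resource_record_sets` call on a Route 53 client, together with the `HostedZoneId` of the zone that holds the record. A short TTL keeps clients from caching the old target for long after a switch.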

Your Call Football and Autumn Games are only two of the companies that have realized the benefits of working with an experienced AWS partner such as Mission to devise a winning containerization strategy based on the container option that lets them deploy code quickly and efficiently. Mission’s technical knowledge and expertise help organizations of all types plan and implement the cloud infrastructure that best fits their unique needs.
